| ||||||||

|

Home About_Me About_My_Mentor About_My_Project Links My_Journal |

My Project I will be working, along with Philip, on an arbitrary precision arithmetic library that is optimized for the CUDA platform. Introduction So, it turns out that the way we think of numbers and the way a computer deals with numbers are very different. From a mathematical sense, we are most interested in helping scientists deal with real numbers, which include whole numbers (1, 2, 5, 10), fractional numbers (1.2, 3.2432, 10.5), and their negatives. We can see that some real numbers, say one-third for example, have an array of digits that repeat: 1/3 = 0.333333333... Now, we could represent numbers as a fraction of two integers (1 and 3), but that would leave out irrational numbers, a subset of real numbers. These numbers have a decimal representation that does not repeat, which puts us back at square one (not to mention that even computer integer types are also limited in size). Floating-Point Numbers So, what did scientists do? Well, they came up with a numeric type called floating-point numbers (or "floats" for short). They store fractional quantities using a specific format. While the details of this format are not important to understand the problem we are trying to solve, it is enough to understand that this type can only store a finite number of significant digits, after which the quantity being represented is "chopped", in which the additional digits are discarded, leaving only an approximation of the original quantity:

1/3 = 0.333333333333333314829616256247

The approximation is good up to around the 17th digit

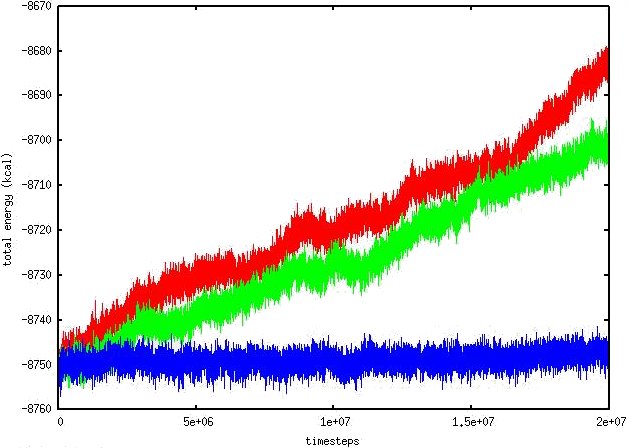

No doubt, some of you may read this and say "Well, you still have 16 digits of accuracy." This is true, and for a large majority of applications, this is more than sufficient. Some scientific applications, however, render this small discretion unacceptable (and the floating-point number useless). One such example is CHARMM, a package for macromolecular simulations. An ultimate goal of the simulations we run are a spatial resolution of atomic scale spread over large length scales and a temporal resolution of microseconds over large time scales (effectively, continuum physics with atomic detail). A side-effect of this great ambition is the incredible demand for high-precision numerics, but simply including more digits is not enough. High-precision arithmetic also comes at a high-performance hit, so an ideal numeric type would be able to dynamically adjust precision based on certain conditions, maintaining the constantly changing balance of accuracy and performance required by the scientist. Enter the GPU Before GPU's, we have been practically stuck on how much we can give and take with regards to performance. Multiple CPU's were as good as it got, but they never seemed to be fast enough, and usually had distributed memory, requiring lots of copying and message-passing, resulting in losses in efficiency for all but the most coarse computations. The use of GPU's for numeric computations represents an immense jump in the capability of high-performance machinery and a significant step towards the goals of our simulations. But, we have another problem. The IEEE standard defines the format for storing floats as well as the conditions for various floating-point operations. These include addition, subtraction, multiplication, division, and taking the square root (sqrt). Among these requirements is that an operation on the result of its inverse should return the original float ( (A+B)-B, (A*B)/B, and sqrt(A*A) should all return A as the result). As it turns out, some of the single-precision floating point arithmetic, which benefit from many performance optimizations from the GPU hardware, are not IEEE compliant. These kinds of operations result in small deviations from the original float -- a type of numerical degeneracy we call "drifting".  Figure 1 -- An example of drifting in a constant energy molecular dynamics simulation.

| |||||||