My REU took place from early June to the end of July 2016.

In my first week at UNM, I made a point of exploring the campus, since it was one of the larger campuses I had been to. One of the first things I noticed about Albuquerque, and especially the campus, was its adobe style.

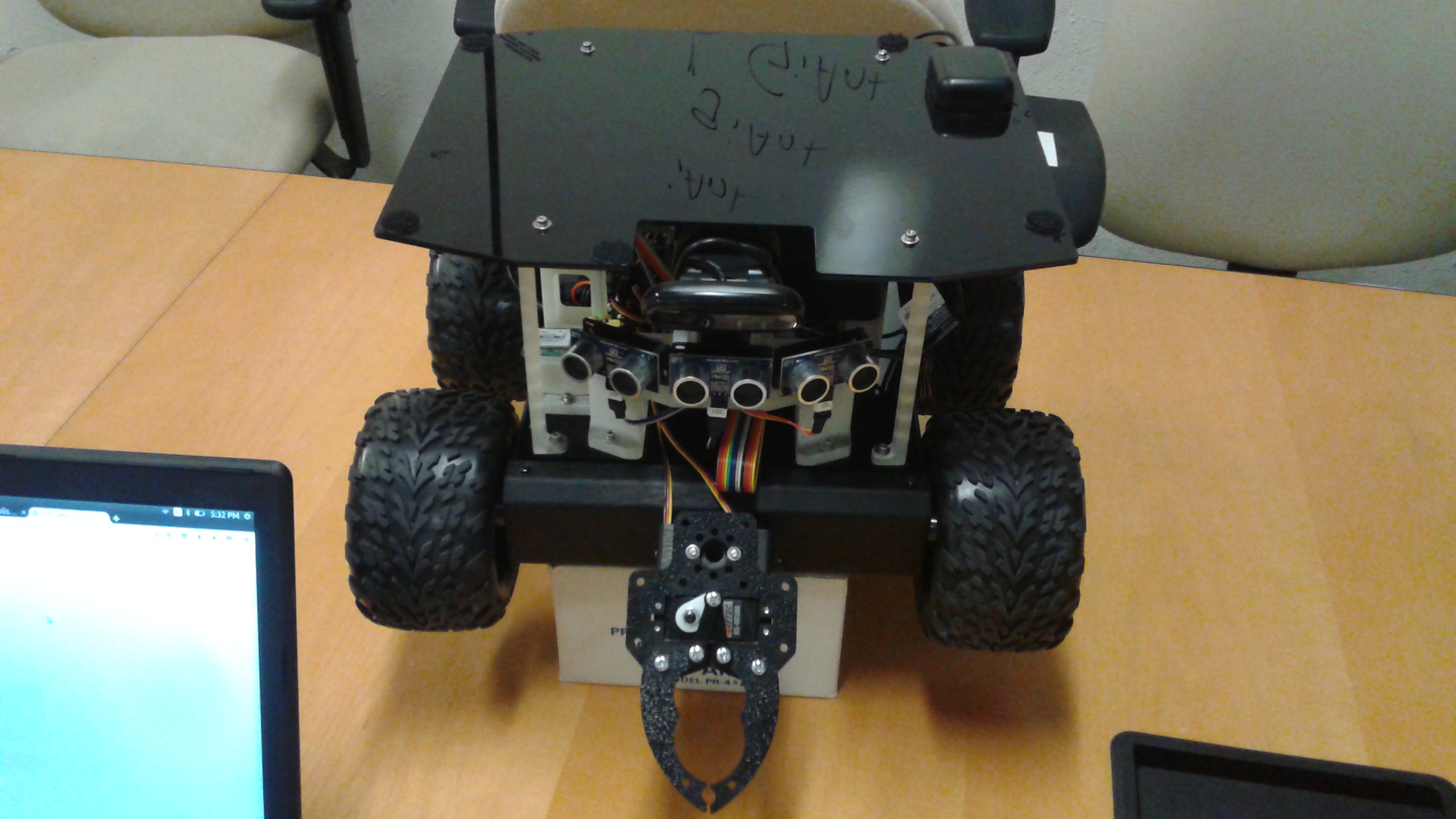

I was nervous going into the lab for the first time, but Jake Nichol quickly took me in and made me feel right at home. My first task was to build a rover, which was something I had never even thought about doing. Luckily, Jake had written an assembly manual for the rover, having worked closely on its design and build.

I worked on the rover for a few days and took this as an opportunity to ask questions about each component on the rover to understand how it worked. After interrogating Dr. Joshua Hecker and Jake with all of my questions, I finally had a comprehensive understanding of what was going on physically with the rover and how it interfaced with the code.

The lab was in the process of installing grippers onto the rovers to pick up objects. At this time the claw could only be moved remotely. I wanted to understand how the claw moved and worked. After playing around with the rovers for a bit and understanding how they worked, I asked what the next step was. The goal was to get the rovers to autonomously pick up blocks with the new claw prototypes, which I eagerly volunteered for.

By this time I had become comfortable with the lab and started to work much more independently. I had a bit of experience with Robot Operating System (ROS) and Linux, so I also used some of this time helping the other interns since we were tackling the same problem. I was already familiar with the code base that the rovers used having worked with the simulated rovers previously, so I picked up the code fairly quickly.

The algorithm I wanted to implement was simple. My understanding of the problem is this:

- The rover detects an AprilTag Block visually.

- This tag is represented on a 2D image and has 5 coordinate values: the center and the four corners.

- The claw for the rover also has to have a coordinate value. Specifically, we need to align the x-component of the center of the claw with the x-component of the center of the tag.

- Once they are aligned, the rover simply moves towards the block.

- The camera that the rover uses to see has a blind spot as soon as it gets close enough to the block, so after a certain distance the rover will have to rely on a fully preprogrammed algorithm to pick up the block.
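The alignment step in the list above can be sketched in a few lines of Python. This is only an illustration, not the code that ran on the rovers: the function name, the gain, the tolerance, the forward speed, and the sign convention (positive angular velocity meaning a counterclockwise turn) are all assumptions made for the example.

```python
from typing import Tuple


def alignment_command(
    tag_center_x: float,
    claw_center_x: float,
    tolerance_px: float = 5.0,    # hypothetical "close enough" band, in pixels
    turn_gain: float = 0.002,     # hypothetical gain: rad/s per pixel of error
    forward_speed: float = 0.1,   # hypothetical forward speed, in m/s
) -> Tuple[float, float]:
    """Return (linear, angular) velocity for one step of the alignment.

    While the tag's x-coordinate is off from the claw's x-coordinate,
    turn in place towards the tag. Once they line up, drive straight.
    """
    error_px = tag_center_x - claw_center_x
    if abs(error_px) > tolerance_px:
        # Tag is to the right of the claw in the image -> turn clockwise
        # (negative angular velocity under the assumed convention).
        return (0.0, -turn_gain * error_px)
    return (forward_speed, 0.0)


if __name__ == "__main__":
    print(alignment_command(tag_center_x=400.0, claw_center_x=320.0))
    print(alignment_command(tag_center_x=322.0, claw_center_x=320.0))
```

The blind spot from the last step is not handled here; once the tag leaves the camera's view, a fixed, preprogrammed sequence would have to take over.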

Simple enough. Well, not really. The first task was researching how to extract the required coordinates. Josh pointed me to the AprilTag package that they had used to acquire the tag ID for detection. I spent the day reading through the code base and extracting the data I wanted. I spent the rest of the week integrating the data from the AprilTag package into ROS.

I believe around this time I finally got to meet Dr. Melanie Moses in person. She had been out of town for a while, and she came back so we could attend the Robotics: Science and Systems (RSS) conference at the University of Michigan. The interns who were attending had to create a poster to present at the NASA Swarmathon workshop at RSS. I partnered up with Kirubel Tadesse, a student from Jackson State University in Mississippi.

With the help of people in our lab we finished our poster and headed to Michigan. All I can say about Ann Arbor is that it was beautiful.

The 5 days I spent at the RSS conference were transformative in the decision-making process for my future. At that conference I met so many wonderful people, including Nancy Amato. She took time out of her day to connect me to a professor at UCM so I could compete in the Swarmathon competition the following year. I was ecstatic. I met many students, researchers and experts in the field of robotics and I was a sponge for the next few days. It was an amazing experience being able to talk to anyone I wanted to. The fancy dinners, the drinks, and really the whole experience are something I don't think I could forget.

Before this conference I was planning on working as a software developer for some company, but this turned the idea of graduate school and research into something I could really see myself doing in the future.

We had just gotten back to UNM and were ready to continue where we left off. I had already coded the coordinate system for the AprilTags, and when I spoke with Matthew Fricke, he mentioned something about tracking the tags visually. I decided that since I was working on a similar project, I could implement that along the way. I asked how he wanted to do it, and after speaking with Josh I had a game plan in mind.

The approach:

- The rover detects a tag and gets the 4 pixel coordinates for the corners

- The rover will receive an image from the camera

- The rover will then draw lines connecting the 4 corners

- Finally, it will display this on the GUI

- Repeat, frame by frame, so it shows live on video

- Do this for 1 tag, then for all tags on the screen

I naively thought at first that I could simply draw a square, but this wasn't the case. Objects get distorted when a picture of them is taken, and a homography matrix is used to map them back to their normal dimensions. After realizing this, I used a generic polygon drawer around the tags to account for any odd shapes. I spent a week implementing that, and the final task was to do this for many tags on the screen, which took another week. I had a presentation coming up and finished this just in time.
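To make the polygon idea concrete, here is a minimal Python sketch. It is not the lab's GUI code: the function names are made up for the example, the corners are assumed to arrive ordered around the tag's perimeter, and the actual drawing is left to a `draw_line` callback so the sketch does not depend on any particular graphics library.

```python
from typing import Callable, Iterable, List, Sequence, Tuple

Point = Tuple[int, int]
Segment = Tuple[Point, Point]


def outline_segments(corners: Sequence[Point]) -> List[Segment]:
    """Connect each corner to the next one, closing the loop at the end.

    No right angles or equal sides are assumed, so a tag seen at an
    angle still gets an outline that follows its distorted shape.
    """
    n = len(corners)
    return [(corners[i], corners[(i + 1) % n]) for i in range(n)]


def draw_tag_outlines(
    tags: Iterable[Sequence[Point]],
    draw_line: Callable[[Point, Point], None],
) -> int:
    """Outline every detected tag in one frame; return segments drawn."""
    drawn = 0
    for corners in tags:
        for start, end in outline_segments(corners):
            draw_line(start, end)
            drawn += 1
    return drawn


if __name__ == "__main__":
    # One skewed tag, as it might appear when viewed from the side.
    skewed_tag = [(100, 80), (160, 90), (155, 150), (95, 140)]
    segments: List[Segment] = []
    draw_tag_outlines([skewed_tag], lambda a, b: segments.append((a, b)))
    for segment in segments:
        print(segment)
```

Calling `draw_tag_outlines` once per camera frame gives the live effect. With OpenCV, a call to `cv2.polylines` with `isClosed=True` would do the same job as the callback loop.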

The tracking system took longer to implement than I had originally thought, but I was happy. The next step was to actually get the rover to pick up a block. The first approach was to take the homography matrix and transform it into useful information. I spent a few days learning the math behind this, but then Josh came to the rescue. He let me know that ROS had a package for AprilTags, and I quickly gave it a shot.

The first step was to calibrate the webcams that the rovers had. That basically means running some diagnostics on the cameras to acquire their specifications in a form that the software could understand. All the rovers had the same camera, so this only had to be done once to get it to work for all of them.

The next step was to have ROS read in this calibration data from a file so that it could output a calculated position for any tag it detected. I wasn't expecting any miracles from this package, but it came through a lot nicer than we had thought.

The data that came in was useful because it gave us a position relative to the rover. Without going into too much detail, after many hours spent on calculations, we finally transformed that data into an actual position the rover could use! This process was much faster, but much more tedious than tracking the tags.

The process:

- Detect tag

- Give us data

- Transform the data

- Move to the calculated position

- Pick up block
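For the "transform the data" step, the general idea can be shown with a standard 2D rigid transform. This is a sketch under stated assumptions rather than our actual calculations: it assumes the tag position has already been reduced to a forward and a left offset in the rover's frame (in meters), and that the rover knows its own position and heading (in radians, counterclockwise from the world x-axis). The function name is hypothetical.

```python
import math
from typing import Tuple


def tag_to_world(
    rover_x: float,
    rover_y: float,
    rover_heading: float,  # radians, counterclockwise from the world x-axis
    tag_forward: float,    # tag offset straight ahead of the rover, meters
    tag_left: float,       # tag offset to the rover's left, meters
) -> Tuple[float, float]:
    """Rotate the rover-relative offset by the heading, then translate."""
    cos_h = math.cos(rover_heading)
    sin_h = math.sin(rover_heading)
    world_x = rover_x + cos_h * tag_forward - sin_h * tag_left
    world_y = rover_y + sin_h * tag_forward + cos_h * tag_left
    return (world_x, world_y)


if __name__ == "__main__":
    # Rover at (1, 2) facing along +y, tag half a meter straight ahead.
    print(tag_to_world(1.0, 2.0, math.pi / 2, 0.5, 0.0))
```

The result is a goal position in the same frame the rover drives in, so it can keep heading there even after the tag drops out of view.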

My plan was to use this along with the camera to have the rover center itself, but after tweaking, our calculations were so accurate that the rover could see the block only once and still move to it accurately enough to start picking it up. If it did see the block again, it would update the position to be even more accurate. I had spent so much time focusing on moving the rover to the position that I didn't have code for the claw yet, so I quickly coded that and we had our first rudimentary algorithm to pick up a block. This was an amazing feeling and I ended up recording it and emailing it out. Here's the footage:

This was my final week in the lab and, to be honest, I took it really easy. The next step was to use a PID controller to stop the rover from overshooting its turns as it got closer. The problem with my code was that I was manually changing the rover's velocity values depending on the texture of the floor, whereas a PID controller would automatically adjust the velocity for any situation.
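For anyone curious, a textbook PID controller fits in a few lines of Python. I never got this onto the rover, so treat it purely as an illustration: the class name, the gains, and the output limit are placeholders, and the error fed in would be something like the heading error in radians.

```python
from typing import Optional


class PID:
    """Textbook PID controller with a clamped output."""

    def __init__(self, kp: float, ki: float, kd: float, output_limit: float):
        self.kp = kp
        self.ki = ki
        self.kd = kd
        self.output_limit = output_limit
        self.integral = 0.0
        self.prev_error: Optional[float] = None

    def update(self, error: float, dt: float) -> float:
        """Return the control output for the current error and time step."""
        if dt <= 0:
            raise ValueError("dt must be positive")
        self.integral += error * dt
        # No derivative term on the first call, since there is no history yet.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        output = (
            self.kp * error + self.ki * self.integral + self.kd * derivative
        )
        # Clamp so the command never exceeds what the motors should receive.
        return max(-self.output_limit, min(self.output_limit, output))


if __name__ == "__main__":
    # Placeholder gains; real values would have to be tuned on the rover.
    turn_pid = PID(kp=1.0, ki=0.0, kd=0.1, output_limit=0.5)
    for heading_error in (0.4, 0.3, 0.15, 0.05):
        print(turn_pid.update(heading_error, dt=0.1))
```

The derivative term is what fights overshoot: as the error shrinks quickly, it pulls the command down before the rover swings past the target.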

I researched PID controllers for a bit, but since it was another large task, I decided to learn it mainly for fun. I spent a lot of time socializing with friends during this time and really enjoying myself. I have no regrets about this decision.

All in all, my time spent in New Mexico is a time I will never forget. I could not have asked for a better experience. The friends I made could not have been better and the sights were stunning. At one point while camping, I stepped out in the middle of the night, afraid that some wild animal would attack me since it was pitch black, until I saw the beautiful starry night sky that you can only see in a place like New Mexico.