This week was a short week, as Independence Day was on Friday! Our REU group went to the festival on the riverfront, saw a fireworks show, ate ice cream, and listened to some local bands. It's fun to see how festive the city can be.

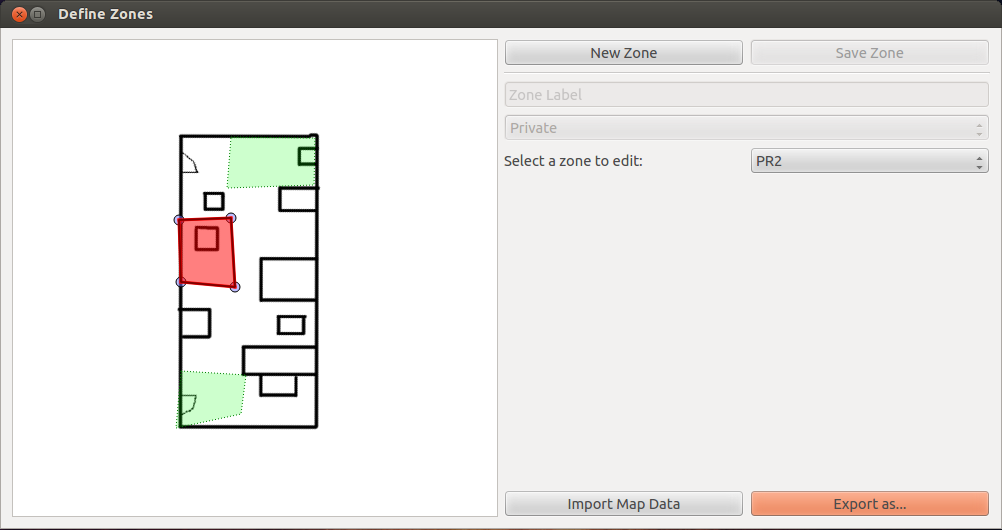

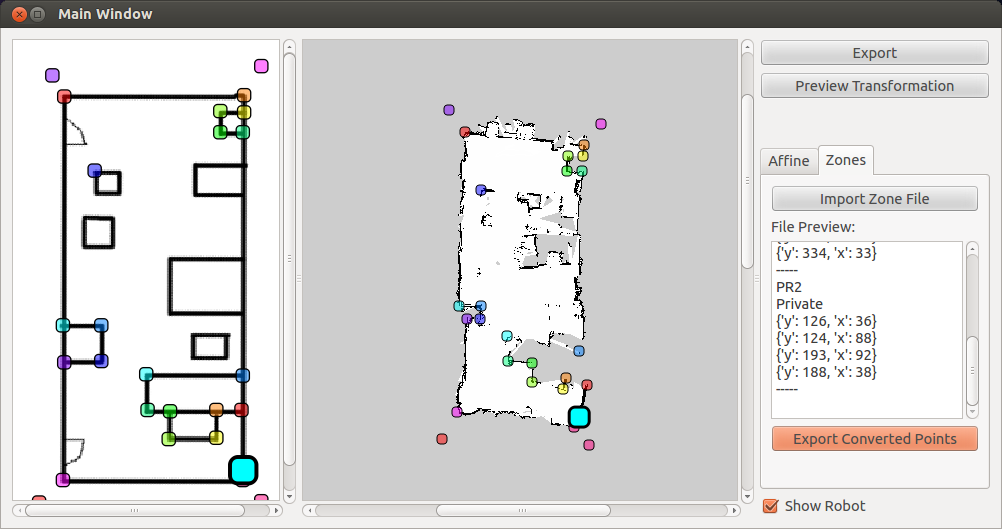

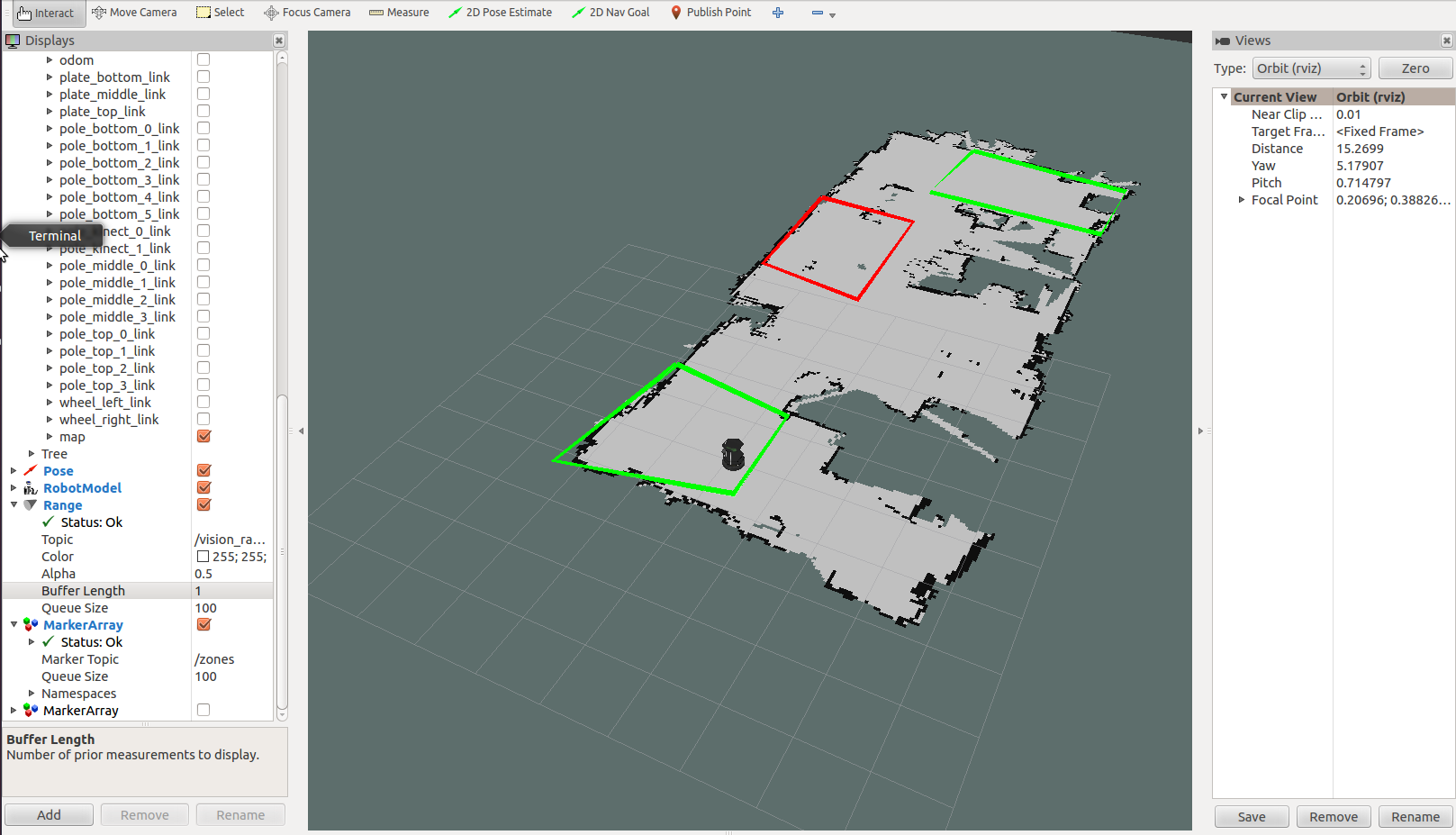

Anyway, as far as my research goes, I have made more progress. At the beginning of the week, I continued researching ways to edit the map and to handle different kinds of mouse events in the GUI. KnowRob is an interesting package that focuses on semantic mapping. Even though it is probably beyond the scope of this project, I think extending that package to privacy interfaces could greatly improve usability.

I went into the library and made a map of the space we plan to use in the user study, and I also made a map of the lab so we can continue running tests. Producing a high-quality map takes a long time, but I think it pays off in the end (we won't have to do it again, as long as I don't accidentally delete the file).

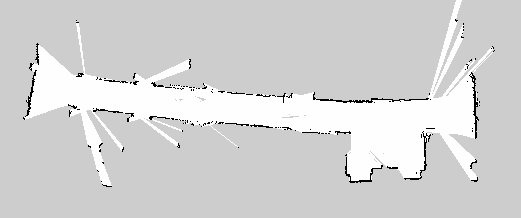

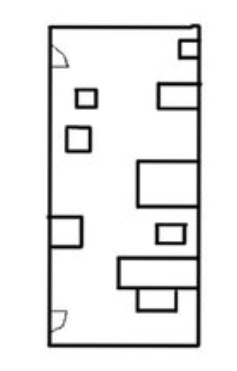

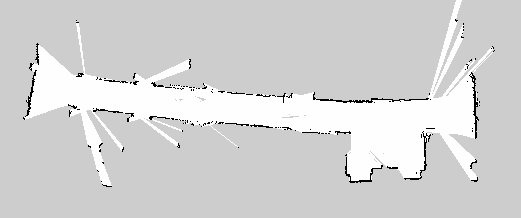

Above is the map of a hallway in the library. It has an open area with elevators as well as study rooms along the hall. The "spikes" in the map are where the laser scan passed through the glass in the doors and into the study rooms. Most of the doors were closed, so we could not drive inside and map each study room.

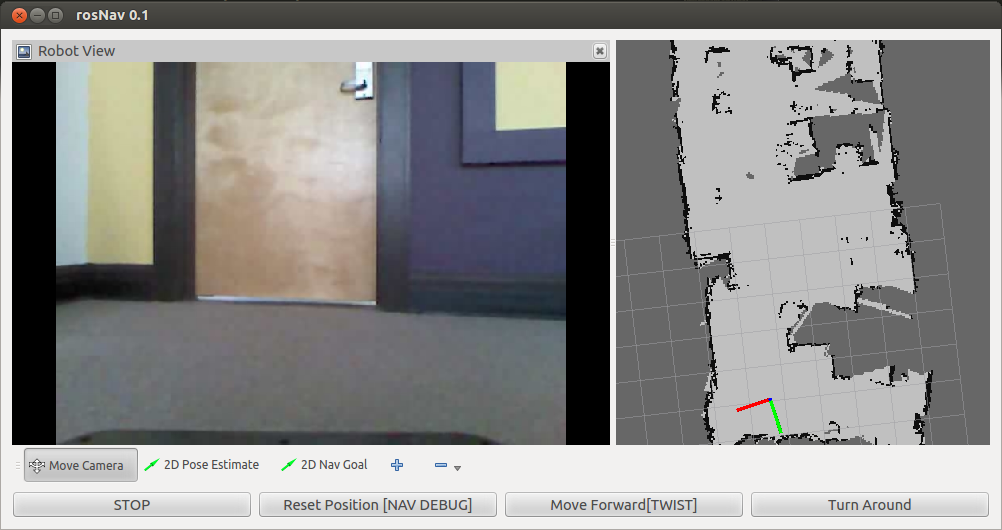

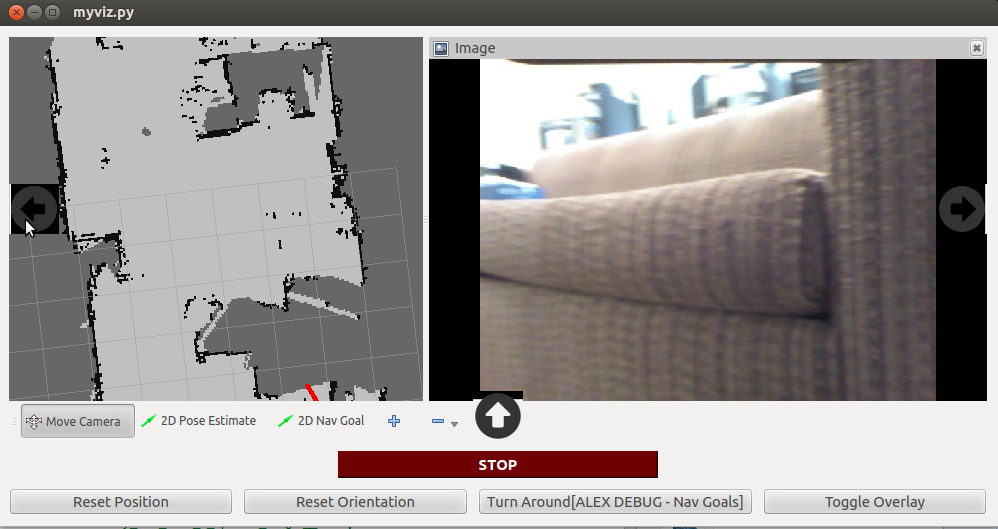

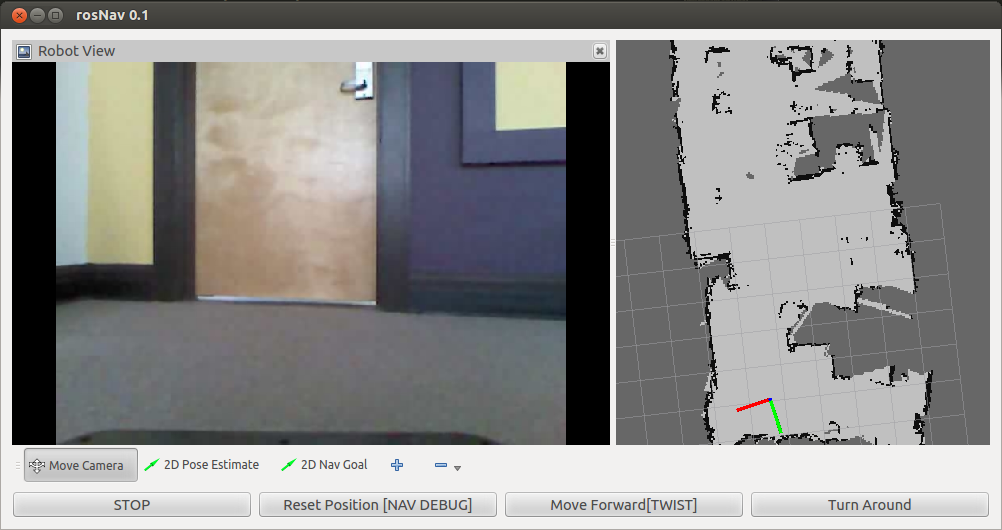

In the user interface, I was able to load the map data (now that I had a map) and mark the robot's location within the map. We need to manually pass in a start frame so the robot knows where it is, since it cannot orient itself without starting coordinates. There is a strange bug where the interface sometimes fails to read in the configuration or map file and shows a blank screen. I'm not sure what is causing this, and it is incredibly frustrating because it seems to happen completely at random. I have posted the problem on ROS Answers, so hopefully I will find out something soon.
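To mark the robot on the map image, the GUI has to convert between map coordinates (in meters) and image pixels. Below is a minimal sketch of that conversion, assuming the map was saved with the ROS `map_server` tools, whose YAML file records `resolution` (meters per pixel) and `origin` (the pose of the lower-left pixel). The `MapInfo` class and both functions are hypothetical names for illustration, not the code from our interface, and the sketch ignores the yaw component of `origin`.

```python
import math
from dataclasses import dataclass


@dataclass
class MapInfo:
    # Hypothetical container for values read from the map's YAML file.
    resolution: float  # meters per pixel
    origin_x: float    # world x (meters) of the lower-left pixel
    origin_y: float    # world y (meters) of the lower-left pixel
    width: int         # image width in pixels
    height: int        # image height in pixels


def world_to_pixel(info, x, y):
    """Convert a map-frame position in meters to (col, row) pixel indices.

    Image rows count down from the top, while map y counts up from the
    lower-left origin, so the row index is flipped. Origin yaw is ignored.
    """
    col = math.floor((x - info.origin_x) / info.resolution)
    row = info.height - 1 - math.floor((y - info.origin_y) / info.resolution)
    if not (0 <= col < info.width and 0 <= row < info.height):
        raise ValueError("position is outside the map image")
    return col, row


def pixel_to_world(info, col, row):
    """Inverse conversion: world (x, y) at the center of pixel (col, row)."""
    x = info.origin_x + (col + 0.5) * info.resolution
    y = info.origin_y + (info.height - 1 - row + 0.5) * info.resolution
    return x, y
```

In a GUI like ours, the robot's estimated position could go through `world_to_pixel` before a marker is drawn, and a mouse click on the map image could go through `pixel_to_world` to get coordinates in the map frame.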

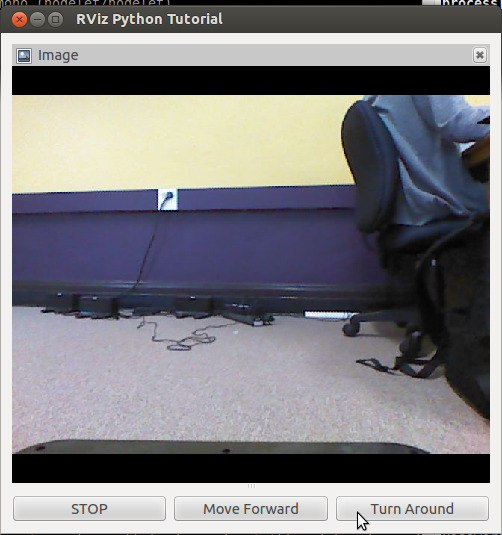

The navigation buttons also had some quirks that I needed to work through. For example, when you click a button to move, you expect that holding it down will keep the robot moving until you release it. At first, the robot only moved once the click was completed, so you had to click repeatedly, moving a small distance each time. After trying a few different approaches, we reimplemented our "move forward" button to keep moving while it is held, and so far movement is very smooth. However, there is still an issue where the loop in the move function causes the rest of the interface to lock up (the video freezes while the loop runs). I will either need to add some sort of break in the loop to let the video catch up, or run the move function in a separate thread or process, independent of the other GUI functionality.
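One way the separate-thread option could look is sketched below: the button's press handler starts a background worker that repeats the movement command at a fixed rate, and the release handler stops it, so the GUI's own event loop (and the video) never waits on the move loop. This is a minimal sketch under assumptions, not our implementation: `HoldToMove` and `send_velocity` are hypothetical names, and `send_velocity` stands in for whatever actually commands the robot (for example, publishing a velocity message).

```python
import threading


class HoldToMove:
    """Repeat a movement command while a button is held, off the GUI thread.

    `send_velocity` is a hypothetical callback taking (linear, angular);
    it is called every 1 / rate_hz seconds while the button is held.
    """

    def __init__(self, send_velocity, linear=0.2, angular=0.0, rate_hz=10.0):
        self._send = send_velocity
        self._linear = linear
        self._angular = angular
        self._period = 1.0 / rate_hz
        self._stop = threading.Event()
        self._thread = None

    def on_press(self):
        # Bind to the button's press event; returns immediately.
        if self._thread is not None:  # already moving
            return
        self._stop.clear()
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def on_release(self):
        # Bind to the button's release event.
        if self._thread is None:
            return
        self._stop.set()
        # Returns quickly: the worker's wait() wakes as soon as _stop is set.
        self._thread.join()
        self._thread = None

    def _run(self):
        while not self._stop.is_set():
            self._send(self._linear, self._angular)
            # Sleep one period, but wake early if the button is released.
            self._stop.wait(self._period)
        # Send an explicit stop so the robot does not keep the last command.
        self._send(0.0, 0.0)
```

In Tkinter, for instance, `on_press` and `on_release` could be bound to a button's `<ButtonPress-1>` and `<ButtonRelease-1>` events. Using `Event.wait` instead of `time.sleep` lets the worker stop immediately on release. Since most GUI toolkits are not thread-safe, the worker only sends commands and never touches widgets.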

So far, I feel like I have learned and accomplished a lot. A few weeks ago I was stumped by how to even get an image from the robot to the screen, and now I'm building on top of that.

I was also able to make a very basic UI that can stream live data from the robot's Kinect camera. It takes data from