Welcome to my project journal! Here I will chronicle my research at the Parasol Lab. I will probably also write a few things about life in College Station in general and what I have been up to.

Week One

I arrived in College Station on Sunday evening and found out where I would be living for the next 10 weeks. After having been away from temperatures above about 70°F for so long, I found it quite warm!

On Monday I spent the morning mostly going over forms, meeting people, and finding out where everything is. In the afternoon I started reading up on STAPL (the Standard Template Adaptive Parallel Library) to become familiar with what I would be working on. One of the papers I read was "Design for Interoperability in STAPL" by Antal A. Buss, Timmie G. Smith, Gabriel Tanase, Nathan L. Thomas, Mauro Bianco, Nancy M. Amato and Lawrence Rauchwerger, which gave me a good introduction to what I would be working with. I also attended a meeting of the STAPL group; it was very interesting to hear what everyone was working on, the kinds of problems they were encountering, and how they planned to solve them.

I spent most of the week waiting for my account at the Supercomputing Facility to be approved so that I could use the Hydra cluster (not to be confused with other clusters of the same name). Once my account came through, I was able to start testing the code we are writing for multiplying matrices stored column-wise and row-wise. So far it looks like this will be an interesting problem! For the first day or so, we did not understand just how many changes were needed to get from the case that was provided for us to the other seven cases we are trying to implement. On Friday afternoon we discussed it and figured out what we needed to do, so I think we are now on the right track.

Besides working on our matrices, we also attend a lunchtime brown-bag lecture once a week. This week's lecture was about writing academic papers as a computer scientist. I thought it was interesting and a good choice of topic: I am not a particularly strong writer, and to succeed, one must know how to write a good paper.

Week Two

I spent my second week in College Station thinking about matrices. Although the algorithms that determine which piece of each matrix should be multiplied and where the result should be stored are simple (at least in six of the eight cases), some of the details of our implementation have been more complicated. For example, while the first case we wrote (where all three matrices are stored row-wise) worked without too much trouble, the second one made me want to tear my hair out at times. We could tell that it was mostly working, but at the end, the result we returned was a stale, earlier version of the result matrix. We spent all of Wednesday trying to figure this out, with the help of a couple of the graduate students. Eventually we found that the problem had to do with synchronization, and learned the preferred way of fixing it. It was frustrating to spend all day unable to solve what was really a fairly simple problem, but at least we became familiar with TotalView, a tool for debugging parallel code.

Once we had several of the cases working, we began running tests to see how they compared with the case we were given. Our versions showed the same overall trend, but their performance was not quite as good as that of the given implementation. In particular, rotating one of the matrices took 25% longer in our case than in the given one, even though the same function was used and the same amount of data was rotated. Next week we plan to work on bringing up the performance of our part, as well as implementing at least the three easier cases where C is stored column-wise.
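To picture what "rotating one of the matrices" means, here is a serial Python sketch of a standard 1-D ring multiply. It assumes A and C are split into row strips and B into column strips (the partitioning described in the Week Three entry), that every dimension divides evenly by the processor count `p`, and that the given case works roughly like this; the function names are hypothetical, and the "processors" are just list entries, not STAPL code.

```python
def matmul(X, Y):
    """Plain triple-loop product, used for the local block multiplies."""
    k, m = len(Y), len(Y[0])
    return [[sum(X[i][t] * Y[t][j] for t in range(k)) for j in range(m)]
            for i in range(len(X))]


def row_blocks(M, p):
    """Split M into p horizontal strips (assumes len(M) is divisible by p)."""
    size = len(M) // p
    return [M[r * size:(r + 1) * size] for r in range(p)]


def col_blocks(M, p):
    """Split M into p vertical strips (assumes the column count is divisible by p)."""
    size = len(M[0]) // p
    return [[row[c * size:(c + 1) * size] for row in M] for c in range(p)]


def ring_multiply(A, B, p):
    """Simulate p processors: A and C split by rows, B split by columns and rotated."""
    a_local = row_blocks(A, p)   # processor r keeps row strip r of A for the whole run
    b_local = col_blocks(B, p)   # processor r starts with column strip r of B
    width = len(B[0]) // p
    c_local = [[[0] * len(B[0]) for _ in range(len(A) // p)] for _ in range(p)]
    for step in range(p):
        for r in range(p):
            q = (r + step) % p   # which column strip of B processor r holds right now
            product = matmul(a_local[r], b_local[r])
            for i, row in enumerate(product):
                c_local[r][i][q * width:(q + 1) * width] = row
        # Rotate B one position around the ring: processor r receives the strip from r + 1.
        b_local = b_local[1:] + b_local[:1]
    # Stack the row strips of C back into one matrix.
    return [row for strip in c_local for row in strip]
```

With `p` processors there are `p` rotations in total, so any extra cost per rotation is paid `p` times over.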

The brown-bag lunch this week was about what to look for when choosing a grad school, and some of the reasons one might want to attend. Grad school always seemed far enough away that I didn't need to think about it yet, but now I'm finding that I need to make some decisions soon.

This weekend, I plan to figure out the bus system! It seems like everything in College Station is very far apart, and it is warm enough that walking several miles to the store does not sound all that appealing.

Week Three

This week we implemented the remaining three easy cases for matrix multiply (the overall partitioning strategies CCC, CRC, and RCC, where the letters give the row-wise or column-wise partitioning of A, B, and C, in that order). We ran some tests and were happy to see that our implementations performed comparably to the one we were given. You can see our graph in Adam's journal.

We then made the unfortunate discovery that we had been working on the wrong problem. We had been handling the cases where each matrix is partitioned into blocks row-wise or column-wise. What we were actually supposed to solve were the cases where the partitioning is fixed (A row-wise, B column-wise, and C row-wise), but the data inside each block is stored in either row or column order. It was somewhat depressing to find out that we had been doing everything wrong, but on the bright side, the problems we had solved turned out to be useful anyway. Besides, the problems we were supposed to be solving are considerably easier, so they should not take much time. The obstacle now is that the code we build on does not yet support these layouts: we have worked out how dgemm (the BLAS double-precision general matrix multiply routine) should be called, but we cannot test it on an actual matrix. Hopefully the changes will be committed soon, and we will be able to see whether our implementations work.
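To make "how dgemm should be called" a little more concrete: reference BLAS dgemm assumes column-major storage, and a row-major block read as if it were column-major is exactly the transpose of that block. So a block's storage order can be absorbed into dgemm's TRANSA/TRANSB flags and leading dimensions instead of copying data. Below is a minimal Python sketch of that idea. `gemm_colmajor` is a hypothetical pure-Python stand-in that mimics dgemm's transpose-flag and leading-dimension semantics with alpha = 1 and beta = 0; it is not a binding to a real BLAS, and the `'row'`/`'col'` layout names are assumptions.

```python
def gemm_colmajor(transa, transb, m, n, k, a, lda, b, ldb):
    """Stand-in for dgemm with alpha = 1, beta = 0: C = op(A) * op(B).

    All buffers are flat, column-major lists; op(X) is X for 'N' and X^T for 'T'.
    Returns C as an m x n column-major list (leading dimension m).
    """
    def op_a(i, l):
        return a[i + l * lda] if transa == 'N' else a[l + i * lda]

    def op_b(l, j):
        return b[l + j * ldb] if transb == 'N' else b[j + l * ldb]

    c = [0] * (m * n)
    for j in range(n):
        for i in range(m):
            c[i + j * m] = sum(op_a(i, l) * op_b(l, j) for l in range(k))
    return c


def to_buffer(M, layout):
    """Flatten a list-of-rows matrix in 'row' (row-major) or 'col' (column-major) order."""
    rows, cols = len(M), len(M[0])
    if layout == 'row':
        return [M[i][j] for i in range(rows) for j in range(cols)]
    return [M[i][j] for j in range(cols) for i in range(rows)]


def multiply_blocks(a, a_layout, b, b_layout, m, k, n):
    """Multiply an m x k block A by a k x n block B, whatever their storage order.

    A row-major m x k buffer read column-major is A^T (k x m), so it is passed with
    'T' and leading dimension k; a column-major one is passed as-is with 'N' and m.
    The same reasoning applies to B.
    """
    transa, lda = ('N', m) if a_layout == 'col' else ('T', k)
    transb, ldb = ('N', k) if b_layout == 'col' else ('T', n)
    return gemm_colmajor(transa, transb, m, n, k, a, lda, b, ldb)


A = [[1, 2, 3], [4, 5, 6]]
B = [[7, 8], [9, 10], [11, 12]]
for la in ('row', 'col'):
    for lb in ('row', 'col'):
        print(la, lb, multiply_blocks(to_buffer(A, la), la, to_buffer(B, lb), lb, 2, 3, 2))
# every line ends with [58, 139, 64, 154], i.e. C = [[58, 64], [139, 154]] in column-major order
```

If C itself has to come out row-major, the same trick applies to the output: computing C^T = B^T A^T in column-major order leaves C in row-major order.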

This week's brown-bag lunch was a presentation by some of the faculty. Dr Andruid Kerne started by describing some of the applications of the work in the Interface Ecology Lab. Dr Tracy Hammond gave a pretty cool demonstration of what they are working on in the Sketch Recognition Lab: you can draw something that looks vaguely like a ramp and something that looks sort of like a car (just a box with a couple of wheels), tell the program there is gravity, and then watch the drawing come to life as the car rolls down the ramp, all from a few simple lines. Dr Dezhen Song talked about his research. Highlights included Collaborative Observatories for Natural Environments, which, for example, uses cameras to try to identify rare birds in the wilderness, and the Ghostrider Robot, a riderless motorcycle that did some pretty amazing things; we watched a video of it, and what it could do was really impressive. Finally, Professor Ricardo Gutierrez-Osuna of the PRISM (Pattern Recognition and Intelligent Sensor Machines) Lab talked about their projects, including one that modifies recordings of accented speech so that it sounds like a standard American accent. Very interesting stuff! I'm almost disappointed that I'm just working on matrix multiplication, which is interesting and important but lacks the coolness factor of something like a robot motorcycle. On the other hand, I don't have to worry about a typo sending a motorcycle for a swim!

Week Four

This past week we implemented the last two cases, RRC and CCR. The best approach we could find was to transpose one of the matrices first, which currently makes these cases far slower than the others: some of the tests took half an hour to complete, where the other six cases took only 2 minutes. We spent some time trying to optimize them. We also spent quite a bit of time fighting with the compiler, because we did not realize at first that the internal compiler error we kept getting was caused by the particular version of gcc we were using.

The other thing we did was make the code work when the blocks are laid out row-wise overall but the data inside each block is stored column-wise, or vice versa. We think the multiply will now work for all 64 cases (8 overall layouts times 8 combinations of in-block storage order), but the 16 cases whose overall layout is RRC or CCR will be very slow. We are also unable to test any of them except the 8 we were originally working on, so some of our answers may be incorrect.

Next week we hope to make the RRC/CCR cases more scalable and bring their running time down to something reasonable. We think copying multiple elements at a time in the transpose step might give a substantial improvement. Otherwise, we have another idea for an algorithm which is harder to implement and needs (number of subviews)² rotates and multiplies instead of (number of subviews), but which may still turn out better than our current implementation. We also hope to find a way to test the other cases to make sure they work. They should scale, though, since the algorithm is the same and only the calls to dgemm differ.
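One way to read the idea of copying multiple elements at a time is a tiled transpose: walk the matrix in square tiles and move each tile as a unit, so the number of copy operations (or, in a distributed setting, messages) drops from one per element to one per tile. Here is a small Python sketch under that assumption; `transpose_tiled` and the tile size are hypothetical, and plain Python lists will not show any cache or communication savings, only the reduced count of bulk transfers.

```python
def transpose_tiled(M, tile):
    """Transpose M tile by tile; returns (transpose, number of tile transfers).

    Edge tiles are allowed to be smaller when the dimensions are not multiples of `tile`.
    """
    rows, cols = len(M), len(M[0])
    T = [[None] * rows for _ in range(cols)]
    transfers = 0
    for i0 in range(0, rows, tile):
        for j0 in range(0, cols, tile):
            # Count one bulk transfer per tile; a real implementation would move
            # the whole tile with a single block copy or message.
            transfers += 1
            for i in range(i0, min(i0 + tile, rows)):
                for j in range(j0, min(j0 + tile, cols)):
                    T[j][i] = M[i][j]
    return T, transfers


M = [[r * 10 + c for c in range(4)] for r in range(6)]
T, transfers = transpose_tiled(M, 2)
print(transfers)   # 6 tile transfers instead of 24 element copies
```

Larger tiles mean fewer transfers, but each one moves more data, so the best tile size would depend on the machine and message costs.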

The brown-bag lecture was given by a panel of Texas A&M grad students. They talked about some of the things we should consider when deciding whether to go to grad school as a master's or Ph.D. student, and a bit about the application process. It was good to hear the perspective of students who went through all of this just a few years ago.

Since Friday was the Fourth of July, we didn't come to work. In the evening some of us went to stand on the top level of the parking garage attached to the Tradition and watch the fireworks. It was pretty nice!

Week Five

This week we wrote code to test all the specializations. To do this, we had to copy data into a transposed format to simulate the cases where the partitioning strategy and the data layout within the block differ, so we could not get any real data on efficiency. However, we expect the performance to be about the same, because the only difference is in what is passed to dgemm. Testing turned up some errors, but we have corrected them, and all specializations now give the correct result. The only major obstacle that remains is the CCR/RRC case, which is still very slow compared to the others because of the transpose.

The other thing we did this week was start on our paper. We have written an abstract, started some of the sections, and made a diagram showing how an example algorithm works. We also found out that we may have to give a 10-minute PowerPoint presentation at next week's brown-bag lunch, so we started putting one together. Next week we plan to keep working on the presentation and the paper, and to see how practical it would be to optimize the transpose. There are also a few things elsewhere in the code that could easily be optimized, so we will look at those too.

The brown-bag lecture this week was about preparing for the GRE. I was relieved to learn that the math is not much harder than on the SAT; I had expected it to be much harder. I do need to start studying, though: it would be good to improve my vocabulary a little and to brush up on junior high and high school math. Also at the brown bag, Surbhi gave her presentation, since it was her last week here. It was interesting to hear what she had been working on this summer.

Week Six

This week we worked on making the multiply work for floats, and on preparing it to support single- and double-precision complex numbers in the future. We also worked on the general case, so that no matter what data is passed to it, it always returns a correct result, even if slowly. We made some progress, but the iterator we are using is incomplete, so although we think our code should work, we do not know for sure.

Besides that, we gave our presentation to the other CS REU students on Thursday, so we needed to prepare our PowerPoint slides and rehearse. Otherwise, we wrote our abstract and worked some on the paper. Mostly we have been trying to master LaTeX (a powerful, professional typesetting system) so that next week we can finish writing the paper and also design the poster.

The brown-bag presentation this week was about what to expect at the poster session, with general advice from a couple of previous participants. We also gave our PowerPoint presentation on what we've been working on. It was stressful, but hopefully it helped prepare us for the poster session.

Week Seven

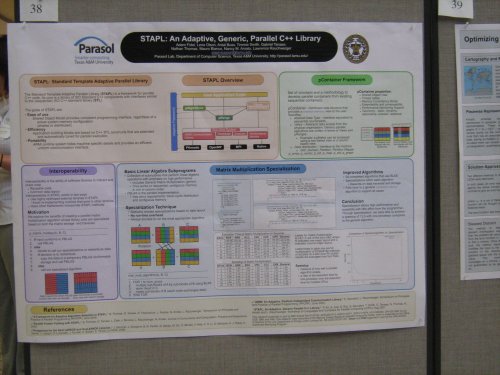

We spent most of this week working on our poster for the poster sessions. We had a template to work from, and some of the information could be copied directly from last year's poster, but it still took a long time. The posters are in PowerPoint format, stored as one very large slide, and getting OpenOffice Impress to cooperate was very frustrating. For example, it has an annoying tendency to save the transparency of our text boxes at 100%, so that instead of black text on a clear background, we get black text on a solid black box. Impress also crashed a few times. To top it off, we only realized when we were nearly finished that last year's poster did not have the dimensions required this year, so we had to cut a lot of the information from ours and rearrange things. All in all, this was probably the most frustrating thing I have experienced during my entire time here.

Besides our poster woes, we worked some on our paper. We also finished implementing the general case using inner products, so now, no matter how the blocks are laid out, the algorithm returns a correct result. This also showed how much of an improvement our specializations are over the general algorithm. Next week is the last week for many of the students, so our papers are due and we will have a couple of poster sessions to present what we have been working on. Next week will probably be busy!
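The general case can be sketched as computing every entry of C as the inner product of a row of A and a column of B, reading and writing elements through layout-aware accessors so that any combination of storage orders works. It never hands a contiguous block to dgemm, which is presumably part of why the specializations beat it. This is a single-address-space Python illustration with hypothetical helper names (`get`, `put`, `general_multiply`), not the distributed STAPL version.

```python
def get(buf, layout, rows, cols, i, j):
    """Read element (i, j) of a rows x cols matrix stored row- or column-major."""
    return buf[i * cols + j] if layout == 'row' else buf[i + j * rows]


def put(buf, layout, rows, cols, i, j, value):
    """Write element (i, j) of a rows x cols matrix stored row- or column-major."""
    if layout == 'row':
        buf[i * cols + j] = value
    else:
        buf[i + j * rows] = value


def general_multiply(a, a_layout, b, b_layout, c_layout, m, k, n):
    """C = A * B for an m x k A and a k x n B, each in any storage order."""
    c = [0] * (m * n)
    for i in range(m):
        for j in range(n):
            # Inner product of row i of A with column j of B, one element at a time.
            value = sum(get(a, a_layout, m, k, i, l) * get(b, b_layout, k, n, l, j)
                        for l in range(k))
            put(c, c_layout, m, n, i, j, value)
    return c
```

For example, with A = [[1, 2, 3], [4, 5, 6]] stored column-major as `[1, 4, 2, 5, 3, 6]` and B = [[7, 8], [9, 10], [11, 12]] stored row-major as `[7, 8, 9, 10, 11, 12]`, `general_multiply(a, 'col', b, 'row', 'row', 2, 3, 2)` gives `[58, 64, 139, 154]`.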

Week Eight

On Thursday we had the last brown bag session. We saw the presentations from the students who hadn't given them yet, ate pizza, and filled out evaluations of the program. We also got free t-shirts, which is always nice.

On Friday we had the judged poster sessions. I was really nervous beforehand, since it sounded like it would be pretty serious, but it turned out that I needn't have worried. The judges asked questions, but none of them were particularly difficult, and they seemed to understand the poster. It would have been nice if more people had come by, because the only people who seemed interested in the posters were the judges. Other than that, I think things went very well. We didn't win, but that's not what matters.

Next week it will be time for some new challenges. Adam will be gone, as will the majority of the other students, so I'll be working on my own. Antal says he has some suggestions for things to work on, so I will probably start with that.

Week Nine

The lab was lonely and very quiet with all the undergrads gone. Antal suggested that I work on implementing a simulation of light diffraction to see how well the matrix algorithms we'd written would perform. It was also suggested that I modify Antal's implementation of SUMMA (the Scalable Universal Matrix Multiplication Algorithm) so that every case has its own specialization, instead of all cases sharing one implementation with if/else statements. I also tried to rework the algorithms to use transpose and non-transpose view types, but didn't make much progress. Finally, I started testing the SUMMA specializations to see how they performed.
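For reference, SUMMA builds C as a sum of panel updates: at each step, one column panel of A is broadcast along each process row and the matching row panel of B along each process column, and every process adds the product of the two pieces it received into its own block of C. The Python sketch below simulates this on a `grid_rows` x `grid_cols` process grid inside a single process, with the "broadcasts" reduced to list slicing. The names and the `panel` width are assumptions for illustration, it assumes the matrix dimensions divide evenly by the grid, and it is not Antal's implementation.

```python
def summa(A, B, grid_rows, grid_cols, panel):
    """Simulated SUMMA: C = A * B on a grid_rows x grid_cols grid of processes."""
    m, k, n = len(A), len(B), len(B[0])
    mb, nb = m // grid_rows, n // grid_cols   # size of each process's block of C
    C_local = {(r, c): [[0] * nb for _ in range(mb)]
               for r in range(grid_rows) for c in range(grid_cols)}
    for t0 in range(0, k, panel):
        t1 = min(t0 + panel, k)               # the last panel may be narrower
        for r in range(grid_rows):
            # "Broadcast" A's panel (block-row r, columns t0:t1) along process row r.
            a_panel = [A[i][t0:t1] for i in range(r * mb, (r + 1) * mb)]
            for c in range(grid_cols):
                # "Broadcast" B's panel (rows t0:t1, block-column c) along process column c.
                b_panel = [B[t][c * nb:(c + 1) * nb] for t in range(t0, t1)]
                local = C_local[(r, c)]
                # Local update: add a_panel * b_panel into this process's block of C.
                for i in range(mb):
                    for j in range(nb):
                        local[i][j] += sum(a_panel[i][t] * b_panel[t][j]
                                           for t in range(t1 - t0))
    # Gather the local blocks back into one matrix.
    C = [[0] * n for _ in range(m)]
    for (r, c), block in C_local.items():
        for i in range(mb):
            C[r * mb + i][c * nb:(c + 1) * nb] = block[i]
    return C
```

In a per-case specialization, the panel extraction and local update would be written for one known storage order, rather than checking the layout with if/else inside the loop.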

I also moved on Thursday, since the Tradition (the student housing where I had been staying) needed to prepare for the new fall residents. Now I am staying in the hotel attached to the Memorial Student Center, which is somewhat less convenient: I have neither a refrigerator nor a microwave, and there isn't a table, so if I want to work in the evening I have to go to the study lounge. It's pretty close, though. The other problem was that the campus wireless wouldn't work with my laptop. After 3 hours at the help desk, I found out to my surprise that while it didn't work in my (fully up-to-date) Gentoo install, it worked automatically in my old Fedora partition. Now that's sorted, so I will be able to run tests over the weekend.

Week Ten

During my last week in College Station, I worked on timing the SUMMA specializations. I ran into a few problems. The main one is that the program sometimes never finishes: it simply runs out of its allotted time and is terminated. This mostly happens when the block size is small, when there are 32 or more processors, or both. The same runs occasionally succeed, so it is a strange error. The other problem was that after a commit, the copy version started taking 10 times longer than before, which wasn't ideal. So my results probably will not be very useful. Instead, I am editing a paper in LaTeX so that the results can be inserted once they are collected.

I am heading home on Saturday. It's been a great summer, and while I won't miss the weather, I really enjoyed working here with everyone. It's been an interesting experience, and while before I wasn't sure about grad school, now I definitely want to go. If you are a student considering applying to the DMP and are reading journals from previous participants to see what they thought (as I did before coming), I encourage you to apply. It's a really great way to spend your summer!