
..Week 10: 2 August - 10 August, 2010..

Since the official REU program ended at UNCC last week, it's been really quiet here.

Aside from my final report, I worked on integrating more sprites into the game, and on random encounters.

Overall, I would say this summer has been a fantastic experience. I've learned a lot about a field I was not familiar with (video game research), learned a lot about the capabilities of cutting-edge technology, and met a lot of dedicated, passionate, and fun people along the way. I'm sure the next group to spend their summer here will have tons of fun!

..Week 9: 26 July - 30 July, 2010..

I spent most of this week running tests of my battle system, gathering user feedback, and using survey data to gauge how effective audio cues are in a battle system and whether they help the player become involved in the game. All of this data will be included in my final report.

We are presenting our work on the 29th, so I have also been working on my final presentation and my poster.

For our final REU dinner, we went to a sushi restaurant. The food was great, but I am sorry the REU will be ending soon!

..Week 8: 19 July - 23 July, 2010..

I have come across two issues this week: sound panning and gesture recognition.

The first has to do with the iPhone's apparent inability to handle 3D sound. Instead of simply moving a single sound around the level in the battle system, we have had to add two more mono sound effects for every sound we want to pan (one with only the left audio channel and one with only the right) in order to make a sound seem to move to the left or right (panning). We play the left-panned sound when we want the player to attack towards the left, and the right-panned sound when we want them to attack towards the right. While this puts yet another burden on the already taxed iPhone hardware, it seems to be the only way to achieve the effect we want.
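A minimal sketch of the pre-panned approach, assuming a sound effect is available as a list of raw mono samples: it builds the left-only and right-only variants once, then picks whichever one matches the direction the player should attack. The names `make_panned_variants` and `cue_for_direction` are hypothetical, not Unity or iOS APIs.

```python
from typing import Dict, List, Tuple

# A stereo frame as a (left, right) sample pair. Input format is an assumption.
Frame = Tuple[int, int]


def make_panned_variants(mono: List[int]) -> Dict[str, List[Frame]]:
    """Build hard-panned copies of one mono effect (hypothetical helper).

    'left' keeps the signal only in the left channel, 'right' only in the
    right channel, and 'center' plays it in both like the original cue.
    """
    return {
        "left": [(s, 0) for s in mono],
        "right": [(0, s) for s in mono],
        "center": [(s, s) for s in mono],
    }


def cue_for_direction(direction: str, variants: Dict[str, List[Frame]]) -> List[Frame]:
    """Pick the pre-panned variant that hints where the player should attack."""
    if direction not in ("left", "right"):
        return variants["center"]
    return variants[direction]
```

For example, `cue_for_direction("left", make_panned_variants([100, -200, 300]))` gives `[(100, 0), (-200, 0), (300, 0)]`. Precomputing the variants trades memory (three copies of each effect) for not having to pan anything at play time, which is the same trade-off described above.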

As for gesture recognition, I have been using a modification of F-test calculations to match user gestures to curves I made by averaging several movement tests I performed, with varying degrees of success. The values for up and down motions are so inconsistent that it is difficult to fit any sort of curve to them, never mind compare a player's gesture to those curves. The left and right values are generally better, and I typically get a recognizable curve for each direction, but both curves can change depending on a variety of factors, such as how the phone is held and how it is swung. As a result, even these curves can be unreliable, and I have to keep averaging them with the curves I created earlier to get something that resembles a median of all the tests I have performed. Nevertheless, this approach works more consistently than previous methods, which tried to determine the direction of the swing from the parity of the acceleration values. I will work on this a bit more, and next week I will test the system to see if others find the concept interesting and fun.
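The post doesn't spell out the modified F-test, so this sketch swaps in a plain sum-of-squared-differences comparison to show the template idea: several recorded traces per direction are averaged into a template, new recordings are folded in as a running mean, and a gesture is labeled by its nearest template. It assumes one acceleration axis per trace, already resampled to a common length; every name here is hypothetical.

```python
from typing import Dict, List

# One acceleration axis, resampled to a fixed length (assumption).
Trace = List[float]


def average_traces(traces: List[Trace]) -> Trace:
    """Element-wise mean of several recorded traces of the same gesture."""
    n = len(traces)
    return [sum(values) / n for values in zip(*traces)]


def fold_in(template: Trace, count: int, new_trace: Trace) -> Trace:
    """Update a template built from `count` traces with one more (running mean)."""
    return [(t * count + x) / (count + 1) for t, x in zip(template, new_trace)]


def distance(a: Trace, b: Trace) -> float:
    """Sum of squared differences (a stand-in for the post's F-test variant)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def classify(trace: Trace, templates: Dict[str, Trace]) -> str:
    """Return the direction whose template is closest to the trace."""
    return min(templates, key=lambda name: distance(trace, templates[name]))
```

Usage, with made-up traces: build `templates = {"left": average_traces([[0, -1, -2, -1, 0], [0, -1.2, -1.8, -0.8, 0]]), "right": average_traces([[0, 1, 2, 1, 0], [0, 0.8, 2.2, 1.2, 0]])}`, and `classify([0, 0.9, 1.7, 1.1, 0.1], templates)` returns `"right"`.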

In other news, we went to the Nascar Speedpark in Concord Mills. We did laser tag and I played several games of air hockey; afterward we headed down to the theater to watch Inception (which was great)!

..Week 7: 12 July - 16 July, 2010..

Currently, the background music is uncompressed, while all sound effects are .mp3 files. This sometimes causes memory errors on the iPhone 3G (newer models seem to handle the sounds better). While problematic, this does not happen often enough to make the game unplayable, and since it seems to be one of the few solutions to our problem, we will stick with this strategy for now.
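Uncompressed (PCM) audio takes sample rate × channels × bytes per sample × duration bytes of memory, which is why uncompressed music weighs so heavily on older hardware. A quick calculation, assuming CD-quality 16-bit stereo at 44.1 kHz (the post doesn't say what format the music actually used):

```python
def pcm_bytes(seconds: float, sample_rate: int = 44_100, channels: int = 2,
              bits_per_sample: int = 16) -> int:
    """Bytes needed to hold uncompressed PCM audio (format defaults are assumptions)."""
    bytes_per_frame = channels * bits_per_sample // 8
    return int(seconds * sample_rate) * bytes_per_frame


if __name__ == "__main__":
    one_minute = pcm_bytes(60)
    # 60 s * 44,100 frames/s * 4 bytes/frame
    print(f"{one_minute:,} bytes (~{one_minute / 2**20:.1f} MiB) per minute")
```

This prints `10,584,000 bytes (~10.1 MiB) per minute`, so even a short looping track adds up quickly; an .mp3 of the same length is typically a small fraction of that size.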

In addition, I have worked on recognizing when a gesture starts and ends, and in which direction it was performed. While left and right gestures can be detected with a fair amount of accuracy, up and down gestures are rather erratic and tend not to be picked up by my system. I am going to graph the acceleration values I get from each motion and see if I can match a player's gesture to curves of best fit.
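One simple way to find where a gesture starts and ends is to threshold the acceleration magnitude: the gesture begins when the magnitude rises above a threshold and ends once it stays below for a few samples. The direction below comes from the initial push rather than from summing the whole window, because a swing's acceleration and deceleration roughly cancel out. The threshold, the axis mapping, and the function names are assumptions for illustration, not the project's actual values.

```python
import math
from typing import List, Optional, Tuple

# Assumed input: (x, y) acceleration with gravity already removed,
# x = left/right, y = up/down. Thresholds are illustrative guesses.
Sample = Tuple[float, float]


def find_gesture(samples: List[Sample], threshold: float = 0.5,
                 quiet_samples: int = 3) -> Optional[Tuple[int, int]]:
    """Return (start, end) indices of the first gesture, or None if there is none.

    The gesture starts at the first sample whose magnitude exceeds `threshold`
    and ends at the last such sample once `quiet_samples` calm samples follow it.
    """
    start: Optional[int] = None
    last_loud = -1
    for i, (x, y) in enumerate(samples):
        if math.hypot(x, y) > threshold:
            if start is None:
                start = i
            last_loud = i
        elif start is not None and i - last_loud >= quiet_samples:
            return start, last_loud
    # Buffer ended mid-gesture, or no gesture was found at all.
    return (start, last_loud) if start is not None else None


def direction(first: Sample) -> str:
    """Label a gesture from its initial push: dominant axis first, then its sign."""
    x, y = first
    if abs(x) >= abs(y):
        return "right" if x > 0 else "left"
    return "up" if y > 0 else "down"
```

With `samples = [(0.0, 0.1), (0.9, 0.2), (1.4, 0.1), (-1.2, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.0)]`, `find_gesture(samples)` returns `(1, 3)` and `direction(samples[1])` returns `"right"`.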

..Week 6: 6 July - 9 July, 2010..

I now have some people in the lab testing the prototype I worked on earlier, and I am working on incorporating the audio cues into it.

The audio cues are proving tricky. The iPhone cannot handle multiple uncompressed audio files at once, and yet sound effects played on top of the background music must be uncompressed, and only uncompressed audio can be sped up or slowed down. That last point matters for giving feedback to the player: for example, fast, frantic music would indicate low health. I will try to find a workaround for this; otherwise, we may not be able to change the background music based on what happens in-game.
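Changing playback speed means working with the raw samples, which is why it needs uncompressed audio. A minimal sketch, assuming mono PCM samples in a list: `rate_for_health` is a hypothetical mapping from remaining health to playback rate (the 1.0 to 1.5 range is a made-up example), and `resample` plays the samples faster or slower using linear interpolation.

```python
from typing import List


def rate_for_health(hp: int, max_hp: int, max_rate: float = 1.5) -> float:
    """Hypothetical mapping: normal speed at full health, max_rate at zero health."""
    missing = 1.0 - max(0, min(hp, max_hp)) / max_hp
    return 1.0 + (max_rate - 1.0) * missing


def resample(samples: List[float], rate: float) -> List[float]:
    """Play `samples` at `rate` times normal speed using linear interpolation."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    out: List[float] = []
    pos = 0.0
    last = len(samples) - 1
    while pos <= last:
        i = int(pos)
        frac = pos - i
        nxt = samples[min(i + 1, last)]  # clamp at the final sample
        out.append(samples[i] * (1.0 - frac) + nxt * frac)
        pos += rate
    return out
```

For instance, `resample([0, 10, 20, 30, 40], 2.0)` returns `[0.0, 20.0, 40.0]`. Note that this naive approach raises the pitch along with the tempo, like playing a record faster; keeping the pitch constant requires a time-stretching algorithm instead.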

..Week 5: 28 June - 2 July, 2010..

We have added a Thief class, and now I am working with accelerometer data in order to get sweeping gestures to work on the iPhone. This is tricky because the iPhone's accelerometers are designed around tilting, and we want to distinguish actual swinging movements from tilts. Nevertheless, I was able to build a basic prototype in Unity that has the character perform different moves depending on which direction the player swings the iPhone.
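A common way to separate tilt from swinging is to treat gravity as the slowly changing part of the accelerometer signal: a low-pass filter (an exponential moving average) tracks it, and subtracting that estimate from each reading (a high-pass filter) leaves the quick changes caused by moving the phone. The sketch assumes readings in g as `(x, y, z)` tuples and a smoothing factor of 0.1; the post doesn't say whether the prototype filtered its input this way, and `split_gravity` is a hypothetical name.

```python
from typing import Iterable, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


def split_gravity(readings: Iterable[Vec3], alpha: float = 0.1) -> List[Vec3]:
    """Return the non-gravity ("user") acceleration for each reading.

    `gravity` is a low-pass estimate of the raw readings; subtracting it
    removes the steady tilt component and keeps the swing. alpha is an
    assumed smoothing factor (smaller = slower-moving gravity estimate).
    """
    gravity: Optional[Vec3] = None
    user: List[Vec3] = []
    for r in readings:
        if gravity is None:
            gravity = r  # seed the estimate with the first reading
        else:
            gravity = tuple(alpha * c + (1 - alpha) * g for c, g in zip(r, gravity))
        user.append(tuple(c - g for c, g in zip(r, gravity)))
    return user
```

Holding the phone still at any angle yields near-zero output, while a sudden sideways swing after steady readings of `(0.0, 0.0, -1.0)` shows up as a spike on the x axis (0.9 g for a 1 g jump with the default smoothing).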

The interface will look somewhat like this (with a better skin and without the Pokemon):

prototype for battle interface

The player sees the enemy's HP and their own, a prompt telling them which way to swing in order to get bonus points, and a choice between a magic and a physical attack. There is also an option to choose a preset item, but since we don't have items yet, it does nothing for now.

..Week 4: 21 June - 25 June, 2010..

Our project was approved!

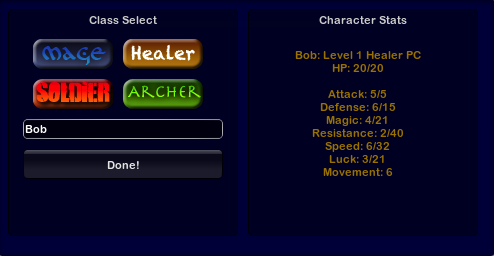

Now we are working on a number of things, including finalizing designs for the game and writing some gameplay code. I made the following to test out how the Player class will work:

character select screen for stats debugging

There are (at the moment) four classes: Mage, Healer, Soldier, and Archer, each of which will have different base stats and grow differently. The stats are also generated randomly, so two players who choose the same class will neither start with exactly the same stats nor grow the same way (although every base stat is capped at ~10, and the maximum each stat can reach is also capped, at random, based on the class). On the screen above, each stat shows its base value over the maximum that stat can rise to for this character (the maximum will be hidden from players). This way, players are encouraged to have multiple characters of the same class, and no one can obtain ridiculously high base or max stats. Users can also enter a custom name.
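A minimal sketch of the randomized stat scheme described above: each base stat is rolled up to a cap of 10, and each stat's hidden maximum is rolled from a class-specific range. The stat names, the ranges in `MAX_RANGES`, and `roll_character` are placeholders invented for illustration; the post doesn't give the real numbers.

```python
import random
from typing import Dict, Optional, Tuple

BASE_CAP = 10  # every base stat is capped at about 10

# Hypothetical (low, high) ranges for each stat's hidden maximum, per class.
MAX_RANGES: Dict[str, Dict[str, Tuple[int, int]]] = {
    "Mage":    {"strength": (30, 50), "magic": (80, 99), "defense": (30, 50), "speed": (50, 70)},
    "Healer":  {"strength": (30, 50), "magic": (70, 90), "defense": (40, 60), "speed": (50, 70)},
    "Soldier": {"strength": (80, 99), "magic": (20, 40), "defense": (70, 90), "speed": (40, 60)},
    "Archer":  {"strength": (60, 80), "magic": (30, 50), "defense": (40, 60), "speed": (70, 90)},
}


def roll_character(cls: str, rng: Optional[random.Random] = None) -> Dict[str, Tuple[int, int]]:
    """Return {stat: (base, max)} for a new character of class `cls`.

    Each call rolls independently, so two characters of the same class will
    usually differ, yet no base can exceed BASE_CAP and no hidden max can
    leave its class range.
    """
    rng = rng or random.Random()
    stats: Dict[str, Tuple[int, int]] = {}
    for stat, (low, high) in MAX_RANGES[cls].items():
        base = rng.randint(1, BASE_CAP)
        stats[stat] = (base, rng.randint(low, high))
    return stats
```

A debug screen could then print each stat as `f"{stat}: {base}/{cap}"`, matching the base-over-max display. Passing a seeded `random.Random` makes a roll reproducible while testing.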

I'm also doing some research on iPhone accelerometer data, and how it works within Unity.

Outside of the lab, I went with a few people to a Shakespeare play as part of the Collaborative Arts program here in Charlotte. The actors were fantastic and the venue was inspired; I'd definitely go again!

..Week 3: 14 June - 18 June, 2010..

We were given a Game Tutorial Challenge: each of us will create a tutorial on how we built some part of our game and upload it to our websites. I will be writing mine on how I integrated Wiimote controls into my game (which was somewhat tricky, as plugins are not allowed in Unity Indie!).

We were also given a description of what we will be working on this summer: a pervasive audio game for the iPhone that focuses on audio cues rather than visual cues. It will be called Akademy Kombat and will require at least two people working together to accomplish certain tasks. We hope audio cues will make the game more immersive than visual cues would, because visual cues tend to distract players too much from the physical world.

My job this week, therefore, is both to help pitch the idea to our mentor and to figure out how to get accelerometer data from the iPhone so the game can tell when the user makes a gesture.

..Week 2: 7 June - 11 June, 2010..

We were given a Game Design Challenge: we had a week to design and create a game fitting one of the following categories (in order of difficulty, starting with the most difficult):

- 2/3D fighter

- Wiimote-Controlled

- Racing

- Maze

- Social Networking

The fighter interested me, but since I had never used Unity before, I opted for the equally interesting Wiimote-controlled option and decided to create a 2D platformer where the character is controlled with a Wiimote. To learn the mechanics such a game needs (creating enemies, causing damage, setting up a 2D perspective, adding respawn points, building a HUD...), I went through the 2D/3D platformer and FPS tutorials offered by Unity. I managed to finish the game and will upload playable files soon!

Outside of work, the REU group played a lot of tabletop games such as Apples to Apples and Catchphrase.

..Week 1: 1 June - 4 June, 2010..

The first day was spent moving into my on-campus apartment; day two involved presentations meant to give us an idea of what we would be working on in our respective labs. I am in the Games2Learning lab! I created an account on Assembla, a website for collaborating on the projects we will be working on this summer. I also installed an SVN client so I could check out the SNAGEM project (a social networking game developed by UNCC to promote social behavior at conferences/schools). As I don't have a lot of PHP experience, I spent most of my time with the code learning how PHP works. I also installed Unity and went through the basic GUI and Scripting tutorials to get a feel for the program; since I have done work in C# and was told Unity can be scripted in C#, I worked through the tutorials (taught in Javascript) in C#.